Self-Flying Drone Can Solve the Biggest Challenge UAVs Face

As the general public and mega corporations get excited about the opportunities provided by Unmanned Aerial Systems, or “drones”, there is still a major challenge for industry players to solve: teaching drones to avoid obstacles, dodge trees in a forest and, in general, have their own self-flying or “sense and avoid” intelligence. Solving it would allow safe autonomous missions for delivery drones and provide an “idiot proof” flying experience for the many beginners out there. It would also help convince the fear-mongering media and the public that drones are not just good, but safe as well.

We reported earlier on Skydio, a team of former Google Project Wing geeks attempting to create a self-flying drone, backed by some of the most prestigious investors in Silicon Valley. They landed a $3 million investment at the beginning of this year, and hopefully they are working hard on their solution, as there isn’t much to be found about their progress online.

In general, the team that succeeds in solving this problem will quickly come to dominate the field. Our prediction is that, unless one of the major stakeholders develops it, the solution will become ubiquitous and get adopted by the manufacturing side of the industry. One team of MIT students and professors just gave a working example of how this can be done: they released a video of their completely stock fixed-wing remote-controlled aircraft flying at speeds of up to 30 MPH while autonomously dodging trees. The software algorithms were created by CSAIL PhD student Andrew Barry, who developed the system as part of his thesis with MIT professor Russ Tedrake.

The best part is that the software is open-source and available online for anyone wanting to toy with the technology.

“Everyone is building drones these days, but nobody knows how to get them to stop running into things,” says Barry. “Sensors like lidar are too heavy to put on small aircraft, and creating maps of the environment in advance isn’t practical. If we want drones that can fly quickly and navigate in the real world, we need better, faster algorithms.”

And the algorithms they have developed are in fact a lot faster, up to 20 times faster than existing solutions. Barry’s realization was that, at the speeds his drone travels, the world simply does not change much between frames, so he could get away with computing just a small subset of measurements, at a distance of 10 meters. “You don’t have to know about anything that’s closer or further than that,” Barry says. “As you fly, you push that 10-meter horizon forward, and, as long as your first 10 meters are clear, you can build a full map of the world around you.”
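To make the “one distance only” idea a bit more concrete, here is a toy sketch in Python. It is not Barry’s code and not the open-source release; it only illustrates, on a single row of pixels, what it could look like to test a stereo pair at one fixed depth instead of searching every possible depth. The focal length, camera baseline, block width and cost threshold are all made-up numbers, and the function names are hypothetical.

```python
# Toy illustration only: check a stereo pair at ONE depth instead of all depths.
# All constants below are assumptions, not values from the MIT system.

def disparity_for_depth(focal_px, baseline_m, depth_m):
    """Pixel shift between left and right views for a point at depth_m."""
    return round(focal_px * baseline_m / depth_m)

def block_cost(left_row, right_row, x, d, width):
    """Sum of absolute differences between a left block and the right block shifted by d."""
    return sum(abs(left_row[x + i] - right_row[x + i - d]) for i in range(width))

def hits_at_depth(left_row, right_row, d, width=4, max_cost=8):
    """Start columns of blocks that match at disparity d, i.e. likely lie at that depth."""
    hits = []
    for x in range(d, len(left_row) - width + 1, width):
        if block_cost(left_row, right_row, x, d, width) <= max_cost:
            hits.append(x)
    return hits

# Arbitrary textured background, identical in both views (treated as far away).
background = [(i * 37) % 101 for i in range(24)]
left_row = list(background)
right_row = list(background)

# Hypothetical camera: 400 px focal length, 0.1 m baseline, 10 m horizon.
d = disparity_for_depth(400, 0.1, 10)

# An obstacle at the horizon shows up d pixels further left in the right view.
obstacle = [200, 210, 220, 230]
left_row[12:16] = obstacle
right_row[12 - d:16 - d] = obstacle

hits = hits_at_depth(left_row, right_row, d)
print(d, hits)  # 4 [12]
```

Only the block containing the obstacle matches at the chosen shift, so only that block is flagged. A real system would also have to deal with flat, textureless regions that match at any shift, which this sketch ignores.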

His solution now extracts depth information at 8.3 milliseconds per frame, which allows the relatively high speed of 30 MPH. Assuming that computing capacity keeps developing at its current pace, reaching even higher speeds is just a matter of time.
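For a feel of what those numbers mean in flight, here is a quick back-of-the-envelope calculation in Python. It uses only the figures quoted in this article (30 MPH, 8.3 ms per frame, a 10-meter horizon) and assumes, for simplicity, that depth extraction is the only per-frame cost, which the article does not claim. The function name is ours.

```python
# Back-of-the-envelope arithmetic from the figures quoted in the article.
MPH_TO_MPS = 0.44704  # 1 mile = 1609.344 m, 1 hour = 3600 s

def flight_budget(speed_mph, frame_time_ms, horizon_m):
    """Relate flight speed, per-frame processing time and the look-ahead horizon."""
    speed_mps = speed_mph * MPH_TO_MPS
    frame_time_s = frame_time_ms / 1000.0
    meters_per_frame = speed_mps * frame_time_s
    return {
        "frames_per_second": 1.0 / frame_time_s,
        "meters_per_frame": meters_per_frame,
        "seconds_to_horizon": horizon_m / speed_mps,
        "frames_to_horizon": horizon_m / meters_per_frame,
    }

budget = flight_budget(speed_mph=30, frame_time_ms=8.3, horizon_m=10)
for name, value in budget.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions the drone processes roughly 120 frames per second, moves about 11 centimeters between frames, and gets around 90 frames (about three quarters of a second) before it reaches something first spotted at the 10-meter horizon.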

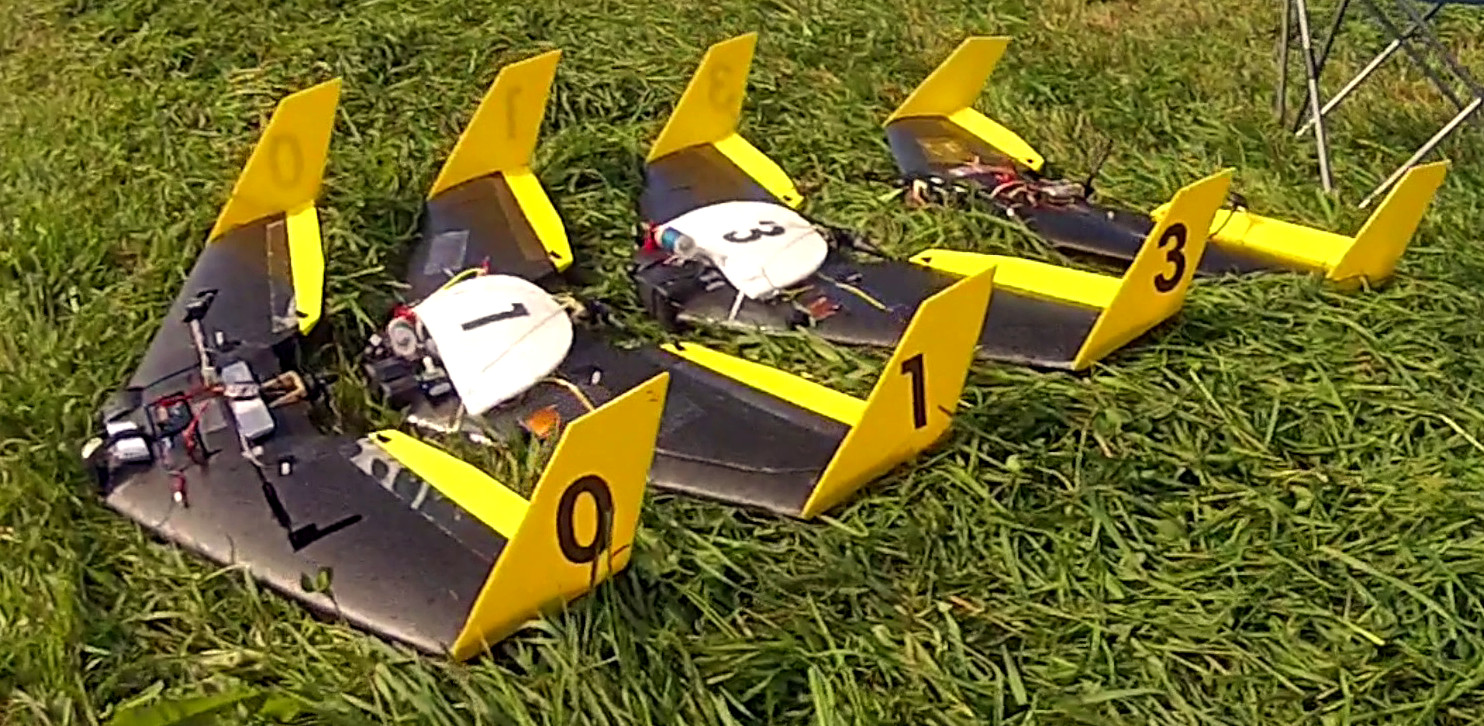

Barry and his team used an aircraft that weighs just over a pound, has a 34-inch wingspan and costs about $1,700. That price includes the cameras on each wing and processors no fancier than the ones your cellphone probably already has.

The solution showcased in the video above is still at the prototype phase and includes “… occasional incorrect estimates known as ‘drift,…’” according to Barry. Still, this is part of a college thesis project with very little funding. I am sure you agree that, once properly funded, this open-source solution could be the first to solve the challenge of giving drones the senses they need to fly safely on their own.

UPDATE:

Check out these exclusive pics sent to us by Andrew just now!

DJI has already developed a collision avoidance system, and it is already available for sale on the Matrice airframe developer platform. Sounds like someone’s trying to reinvent the wheel without doing research.